As part of Bloomberg’s continued commitment to developing the Kubernetes ecosystem, we are excited to announce the Kubernetes Airflow Operator, a mechanism for Apache Airflow, a popular workflow orchestration framework, to natively launch arbitrary Kubernetes Pods using the Kubernetes API.

What Is Airflow?

Apache Airflow is one realization of the DevOps philosophy of “Configuration As Code.” Airflow allows users to launch multi-step pipelines using a simple Python object, the DAG (Directed Acyclic Graph). You can define dependencies, programmatically construct complex workflows, and monitor scheduled jobs in an easy-to-read UI. Since its inception, Airflow’s greatest strength has been its flexibility. Airflow offers a wide range of integrations for services ranging from Spark and HBase to services on various cloud providers. Airflow also offers easy extensibility through its plug-in framework.

    # then, after cluster is created and configured, deploy complete application using Helm:
    # add libraries and third party packages
    cd src
    docker tag airflow-gke gcr.io/google_project_id/airflow-gke:latest
    docker push gcr.io/google_project_id/airflow-gke
    set projectId=google_project_id

For persistence, Airflow needs a database. In Google Cloud, a popular choice is Cloud SQL: managed, highly available database instances from Google. To communicate with a Cloud SQL database from the cluster, a proxy pod, exposed as a postgres service, is used:

    kind: Deployment
    apiVersion: apps/v1
    metadata:
      name: postgres
    spec:
      replicas: 1
      selector:
        matchLabels:
          tier: postgres
      template:
        metadata:
          labels:
            app: airflow
            tier: postgres
        spec:
          serviceAccountName: worker
          restartPolicy: Always
          containers:
            - name: cloudsql-proxy
              image: gcr.io/cloudsql-docker/gce-proxy:1.11
              command: ["/cloud_sql_proxy", "-instances=.
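The DAG idea described above (tasks plus the dependencies between them) can be illustrated without Airflow itself. The following is a minimal sketch, not Airflow's API: it uses Python's standard-library `graphlib` (Python 3.9+), and the task names are hypothetical.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical four-task pipeline: each key maps to the set of tasks
# that must finish before it can start.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"transform"},
}


def execution_order(deps):
    """Return one valid run order for the tasks.

    Raises graphlib.CycleError (a ValueError subclass) if the
    dependencies contain a cycle, i.e. the graph is not acyclic.
    """
    return list(TopologicalSorter(deps).static_order())


if __name__ == "__main__":
    print(execution_order(dependencies))
```

A scheduler such as Airflow does considerably more (retries, scheduling intervals, distributed execution), but the core constraint is the same: a task runs only after everything it depends on has completed.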

To run Airflow, a few pods should be scheduled, most notably the scheduler, webserver, and worker pods. For this purpose, StatefulSets were used in combination with a headless Service for the workers (notice that clusterIP is None):

    apiVersion: v1
    kind: Service
    metadata:
      name: worker
    spec:
      clusterIP: None
      selector:
        app: airflow
        tier: worker
      ports:
        - protocol: TCP
          port: 8793

A headless Service can be used when a cluster IP and load balancing between pods are not necessary (only the list of pod IPs is of interest). In our case the headless Service just gives pods a distinct network identity (worker-0, worker-1, etc.) and simplifies access to local logs. No Persistent Volumes were created here, but we could add them if we wanted to save or archive logs (alternatively, Airflow could be configured to save logs in Google Cloud Storage, Elasticsearch, etc.) or cache some static data.

Using git sync to deliver Airflow DAGs is common practice, but to my taste it offers too much flexibility and opens the door to hard-to-debug inconsistencies. For this reason, DAGs, as well as additional Python packages, are baked into a Docker container image built on top of the puckel/airflow image. To gain more flexibility, the Docker image is built in two steps. First, an image containing additional packages and library code is built in the src directory (this image should not change too frequently). Secondly, an image containing DAGs is built in the root directory (this image may change more frequently, as DAGs could be added, tweaked, or even generated on the fly).
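The distinct network identity that the headless Service gives worker pods follows the usual Kubernetes StatefulSet DNS pattern, `<pod>.<service>.<namespace>.svc.<cluster-domain>`. Below is a minimal sketch of how those names could be derived. It assumes the StatefulSet is named `worker` (as the pod names worker-0, worker-1 suggest), the `default` namespace, and the default `cluster.local` domain; the helper names are hypothetical.

```python
def worker_hostnames(replicas, statefulset="worker", service="worker",
                     namespace="default", cluster_domain="cluster.local"):
    """Return the stable DNS names of StatefulSet pods behind a headless Service.

    All default values are assumptions for illustration; adjust them to
    match the actual chart values.
    """
    return [
        f"{statefulset}-{ordinal}.{service}.{namespace}.svc.{cluster_domain}"
        for ordinal in range(replicas)
    ]


def log_server_base_urls(replicas, port=8793, **kwargs):
    """Base URLs for the port exposed by the worker Service (8793)."""
    return [f"http://{host}:{port}" for host in worker_hostnames(replicas, **kwargs)]


if __name__ == "__main__":
    for url in log_server_base_urls(2):
        print(url)
```

Because each worker keeps the same name across restarts, the webserver can address a specific worker directly when it needs that worker's local logs, which a load-balanced Service could not guarantee.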

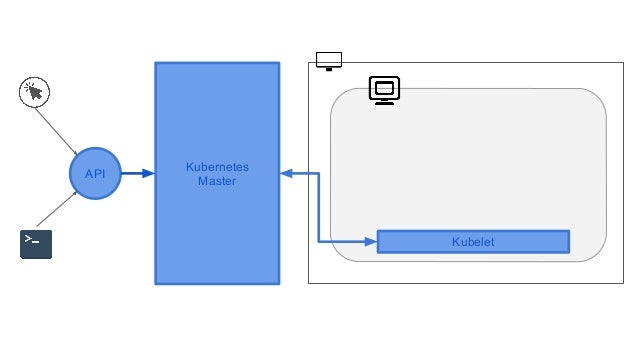

Apache Airflow is a well-known workflow management platform. A few years ago Google announced Composer, a fully managed Kubernetes installation of Apache Airflow, which is a great way to start automating your ETL, ML and DevOps chores using Python and Google Cloud Platform. Still, it could be desirable to install Airflow on GKE from scratch for a variety of reasons: some may strive to gain more flexibility, or you could be running Airflow on premises and just looking for a way to quickly spin up a similar cluster in the cloud to process some extra bulky data.

In this post I describe how to install and configure Apache Airflow components on GKE: Celery Executor, Redis and Cloud SQL. To deploy to Kubernetes I use a Helm chart. As Airflow is quite a complex platform, installation involves a lot of infrastructure work: a cluster should be created, Cloud SQL provisioned, an SSD disk for Redis created, the necessary Google APIs enabled, etc.
